With our new DxOMark Mobile protocol, we can now test and score the additional features enabled by the dual-camera system of the Apple iPhone 7 Plus, and compare it fairly to the iPhone 7 and other flagship mobile devices. We published a review of the iPhone 7 Plus using our new protocol, and re-tested the Apple iPhone 7 with it as well. In this article, we’ll highlight how we tested the iPhone 7 Plus’s additional Telephoto and Portrait features, and how they contribute to its higher overall score.

iPhone 7 Family: Recapping features common to both models

With the launch of the iPhone 7 and 7 Plus, Apple improved its already excellent cameras from the previous generation, and added a variety of new features that it hopes will continue to make the iPhone the world’s most popular camera. While some of the new features are reserved for the 7 Plus, both cameras have received plenty of upgrades – including a brighter lens, improved image processing, four-element flash, and optical image stabilization (OIS).

The primary rear camera for both the iPhone 7 and 7 Plus is a 12MP, 1/3-inch sensor with a wide-angle (28mm equivalent) f/1.8 lens that features OIS. Both phones also use a new, wider color gamut, making for richer colors when used with Apple or other high-end displays that support the DCI-P3 standard. Apple is also proud of its new algorithms running on a beefy new image processor that melds together several images to create the best possible result. Based on our tests, we’ll show you how the new technologies measure up.

The iPhone 7 and 7 Plus offer great exposures with wide dynamic range, accurate white balance and color rendering, as well as good detail preservation when shooting outdoors in bright daylight. This has garnered both models excellent photo sub-scores. The main weaknesses are a loss of very fine detail and some visible luminance noise in low light, along with some focusing irregularities in all lighting conditions.

Remarkable stabilization in video mode, together with fast and smooth autofocus, nice details, and vivid color in bright light, produced a high Video sub-score of 76 points for both phone models.

iPhone 7 Plus: The second camera makes the difference

The iPhone 7 Plus features additional capabilities based on its dual-camera architecture. It uses the second camera for a 2x optical zoom and computed depth information. Its new Portrait mode is designed to use the depth information gleaned from analyzing the images from both of its cameras to selectively blur image backgrounds while keeping the foreground subject sharp. This is intended to mimic the shallow depth-of-field effect and pleasing bokeh that photographers who use high-end standalone cameras can achieve.

Since the difference between the two phones is the iPhone 7 Plus’s second rear camera, and its use for telephoto (Zoom) images and computational bokeh (depth effect, seen in Portrait mode), we’ll start our comparison with an overview of how the additional camera works, as well as its strengths and weaknesses.

Dual-camera specification on the iPhone 7 Plus

The additional rear camera on the iPhone 7 Plus is quite a bit different from its primary camera. It maintains the 12MP resolution, but features a longer focal length lens (56mm equivalent), which in turn drives the need for a slightly smaller aperture (f/2.8), as well as a smaller sensor size (about 2/3 as large) to keep the iPhone 7 Plus as thin as the standard iPhone 7. In turn, the smaller sensor size means that the pixels on the second camera are also slightly smaller (1 micron versus 1.22 microns on the primary camera), and therefore more susceptible to noise.
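The "about 2/3 as large" figure can be cross-checked from the two pixel pitches quoted above. The following is a minimal sketch, assuming square pixels and equal pixel counts on both sensors (both are 12MP); the function name is ours, for illustration only.

```python
# Pixel pitches quoted in the article, in microns.
PRIMARY_PITCH_UM = 1.22
TELEPHOTO_PITCH_UM = 1.0


def area_ratio(pitch_a_um: float, pitch_b_um: float) -> float:
    """Per-pixel area of camera A relative to camera B, assuming square pixels."""
    return (pitch_a_um / pitch_b_um) ** 2


# With the same 12MP pixel count on both sensors, the per-pixel area ratio
# is also the ratio of the total light-sensitive areas.
ratio = area_ratio(TELEPHOTO_PITCH_UM, PRIMARY_PITCH_UM)
print(f"Telephoto pixel area is about {ratio:.2f}x the primary pixel area")
```

Each telephoto pixel therefore collects roughly two-thirds of the light a primary-camera pixel would at the same exposure, which is consistent with the higher noise noted above.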

Along with the second rear camera comes a new 2x telephoto mode that provides optical zoom capability. In addition, when the phone’s camera app is set to Portrait mode, the phone uses both cameras to create a simulated bokeh (depth effect).

Understanding the iPhone 7 Plus’s optical zoom

Lack of a true optical zoom has been a glaring limitation of smartphone photography. Virtually all smartphone cameras are equipped with a relatively wide-angle fixed focal-length lens, which means if you want to take a closeup of a subject, you need to either move closer to the subject or zoom in digitally (i.e., crop to compose). The iPhone 7 Plus introduced a way to shift the camera from wide-angle to a true telephoto mode while zooming.

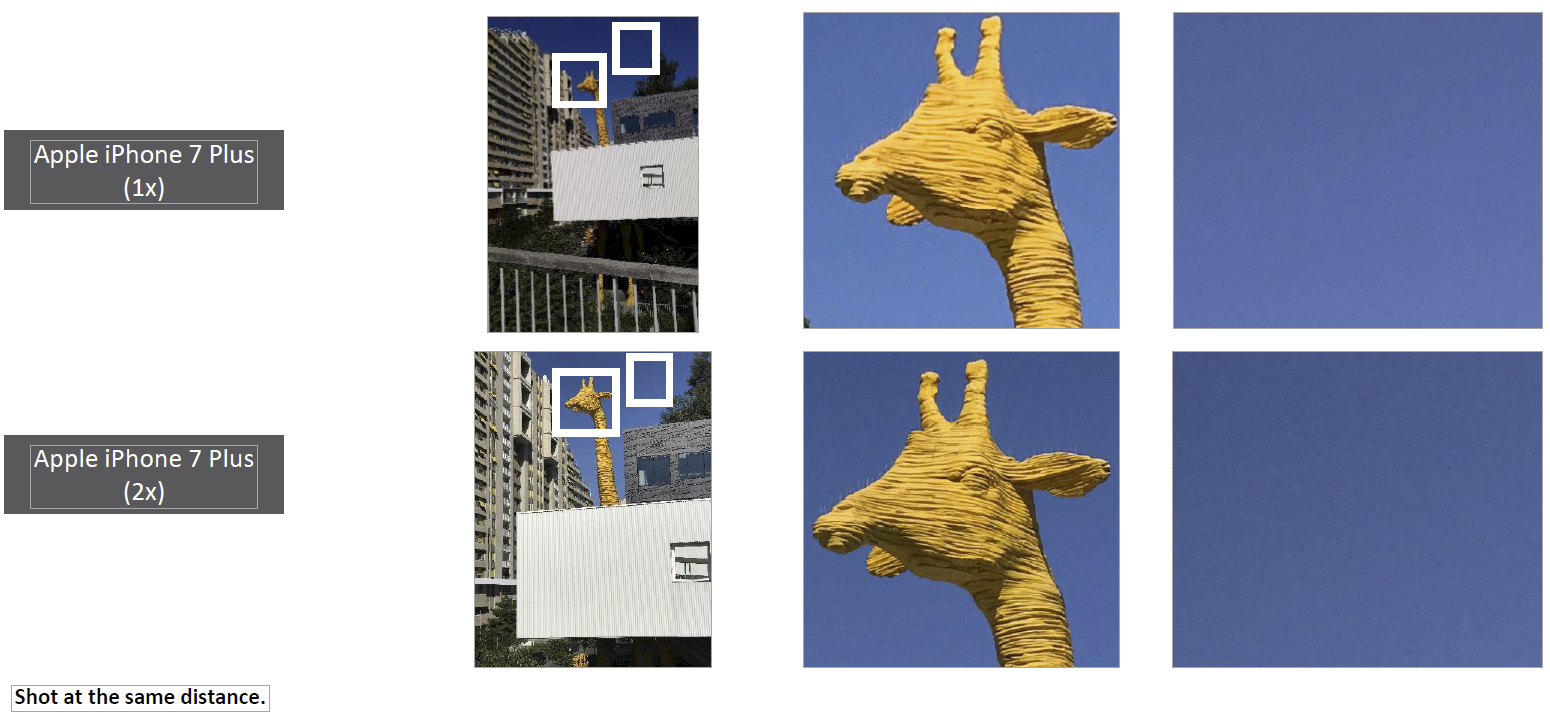

While not technically a zoom lens, the longer focal length of its second lens gives the iPhone 7 Plus the ability to capture native images at a 2x zoom (56mm full-frame equivalent as opposed to 28mm). This implementation provides some important benefits. Using optics for tighter composition typically produces a more detailed image than can be obtained by digitally zooming, which simply crops the image from the wide-angle camera. In addition, longer focal lengths allow the subject to be positioned further away from the camera, which reduces such deformations as unnaturally large noses often seen in portraits shot with wide-angle lenses relatively close to the subject.

The following sample images were captured in 1x and 2x modes:

If we attempt to recreate the 2x zoom digitally (as with the iPhone 7, for example), the resulting image is not nearly as sharp as the photo on the right taken with the iPhone 7 Plus’s true optical zoom. The image – shot under bright light at 2x zoom – also shows that the iPhone 7 Plus captures more details than either the iPhone 7 or the Google Pixel.
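To see why a digitally recreated 2x zoom loses so much detail, it helps to count the pixels left after cropping. This is a rough sketch that assumes digital zoom is a pure center crop before any upscaling; real camera pipelines may add multi-frame processing that this does not model, and the function name is hypothetical.

```python
def effective_megapixels(sensor_mp: float, digital_zoom: float) -> float:
    """Megapixels left after a center crop that emulates `digital_zoom`.

    Assumes digital zoom is a pure crop: each side of the frame shrinks by
    the zoom factor, so the pixel count shrinks by its square.
    """
    if digital_zoom < 1.0:
        raise ValueError("digital_zoom must be >= 1.0")
    return sensor_mp / digital_zoom ** 2


# 12MP wide-angle camera cropped to match the 2x telephoto framing.
cropped_mp = effective_megapixels(12.0, 2.0)
print(f"2x digital zoom on a 12MP sensor keeps about {cropped_mp:.1f}MP")
```

Under that assumption, the single-camera crop starts from a quarter of the pixels that the telephoto camera records natively for the same framing.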

Scoring Zoom on the iPhone 7 and 7 Plus

We’ve seen that the longer focal length of the lens on the 7 Plus provides more details in telephoto images captured in bright light. However, there are trade-offs inherent in Apple’s dual-camera design, because the secondary camera has a smaller sensor and slower lens (smaller aperture) than the primary camera. It also lacks Optical Image Stabilization — a design decision that was likely made to accommodate the longer focal length 56mm (full-frame equivalent) lens that has to fit within the shallow depth of the iPhone.

In low light, the iPhone 7 Plus uses its main camera even when zoomed in, so its results are similar to the iPhone 7’s.

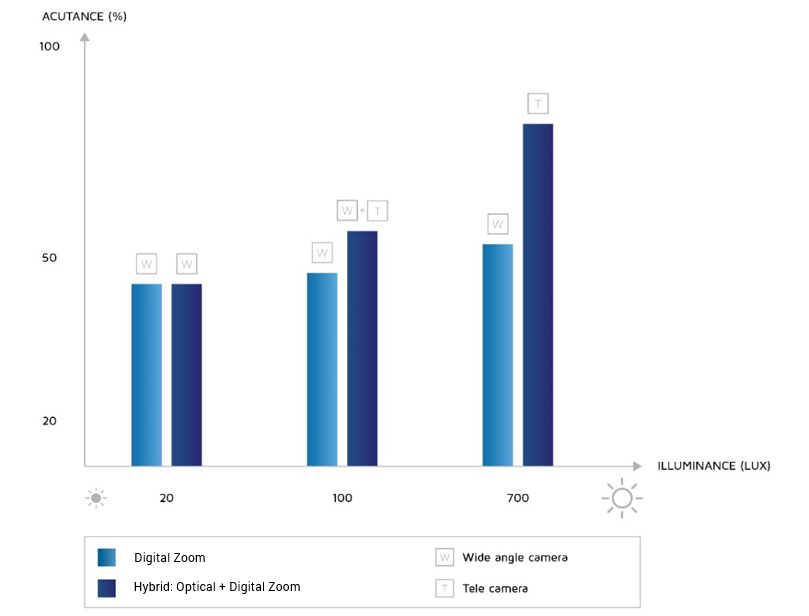

To evaluate how well these trade-offs succeed, we used our new Zoom protocol to test both devices at a variety of focal lengths. Our testing uses varied lighting conditions ranging from very low to bright light, to provide an understanding of how the devices perform in real-world situations. What we found was that in bright light the 7 Plus dual camera offered a significant advantage. In dim light (for example, the 100 Lux measurement in the chart), the 7 Plus had a slight advantage in detail, while in low light (for example, the 20 Lux measurement), the cameras essentially performed identically. That is because in low light, the 7 Plus relies on its traditional wide-angle camera, so the images produced are very similar to the ones taken with the iPhone 7, and lose detail compared to those from the Google Pixel.

Optical zoom comes at a slight cost in noise

The increased detail from the telephoto lens comes with a slight increase in noise, due to the smaller pixel size of the second camera.

We compare zooms by setting the camera zoom to a 50mm and 85mm full-frame equivalent field of view by using whatever zoom method the camera supports (optical, digital, or hybrid) so that the full target is framed. We then evaluate how a specific area of the target is rendered by each camera by measuring sharpness in object space. This means that we evaluate the detail on a subject of interest at a fixed distance from the photographer.
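As a rough illustration of what reaching those two fields of view demands from each phone, the sketch below computes how much digital cropping is left after picking a native lens. It assumes the camera uses the longest native lens that does not exceed the target focal length; that selection rule is our assumption, not a description of Apple's logic (which, as noted above, falls back to the wide-angle camera in low light).

```python
WIDE_MM = 28.0   # full-frame equivalent focal length of the primary camera
TELE_MM = 56.0   # full-frame equivalent focal length of the second camera


def plan_zoom(target_mm: float, native_lenses_mm=(WIDE_MM, TELE_MM)):
    """Return (native lens, remaining digital crop factor) for a target view.

    Hypothetical rule: use the longest native lens that is not longer than
    the target, then crop digitally to cover the rest.
    """
    usable = [f for f in native_lenses_mm if f <= target_mm]
    if not usable:
        raise ValueError("target is wider than every native lens")
    lens = max(usable)
    return lens, target_mm / lens


for target in (50.0, 85.0):
    dual_lens, dual_crop = plan_zoom(target)
    single_lens, single_crop = plan_zoom(target, native_lenses_mm=(WIDE_MM,))
    print(f"{target:.0f}mm: dual camera crops {dual_crop:.2f}x from {dual_lens:.0f}mm, "
          f"single camera crops {single_crop:.2f}x from {single_lens:.0f}mm")
```

Under this rule, the 85mm target needs roughly a 1.5x crop from the telephoto camera but about a 3x crop from a single wide-angle camera.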

As expected, the additional camera with telephoto lens contributes to the higher Zoom sub-score for the iPhone 7 Plus. However, while the telephoto lens on the second camera of the 7 Plus is a win in good lighting, challenges in combining images from the two cameras and the use of the main camera in low light mean that it scores only 11 points higher (46 versus 35) for Zoom than the iPhone 7. While that is a noticeable difference, it doesn’t put the Plus much ahead of the Nokia 808 PureView, which scored 42 using a different approach with its very large 41MP sensor and cropping.

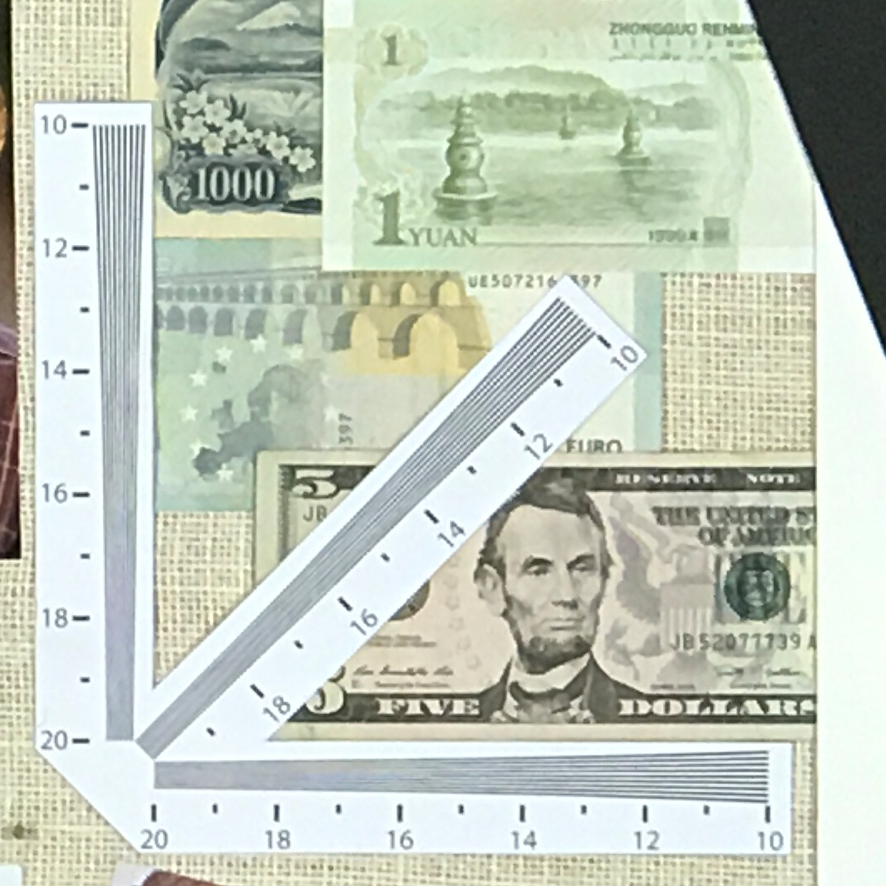

There is also still quite a bit of room for improvement in smartphone Zoom capabilities when we compare them to what is possible with a standalone camera, as shown in these crops from Zoom test images:

Scoring Bokeh on the iPhone 7 Plus (Portrait mode)

A major differentiator of high-end standalone cameras such as DSLRs, when compared to smartphones, is their ability to let the photographer create images where the subject is razor-sharp, and the background is pleasantly blurred. Typically, this shallow depth-of-field effect is achieved by coupling a wide-aperture lens with a large sensor.

Conversely, smartphones have very small sensors, so even those with relatively fast (wide-aperture) lenses are incapable of creating images with a shallow depth of field and blurred background. Various desktop software tools can be employed to achieve a simulation of this effect during post-processing, but they require a considerable amount of manual intervention to select the subject area, then determine how much blur to apply. The goal of Apple’s new Portrait mode, and specifically of its Depth Effect, is to automatically mimic the results that can be obtained by DSLRs at the time of capture.

Depth Effect: Partial simulation of shallow depth of field

The iPhone 7 Plus can’t actually create the effect of depth with its optics because of its small sensors and short focal length lenses. However, by using images taken simultaneously with its dual cameras, it can in fact create a simulated depth effect in real time. The iPhone first estimates the distance of each object in the scene, after which it calculates exactly how much blur to apply to each portion of the image, with more blur applied to those objects determined to be further away, while keeping objects on or near the plane of focus sharp. Apple appears to have deliberately chosen to reduce the effect on foreground objects, most likely to prevent artifacts.
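The behavior described above (more blur for distant objects, sharp focus plane, reduced effect on the foreground) can be sketched as a simple depth-to-blur mapping. This is an illustrative model only, not Apple's algorithm: the blur is taken as proportional to the difference in inverse distance from the focus plane, which is how defocus scales for a thin lens, and the strength, cap, and foreground scale are hypothetical parameters.

```python
def blur_radius_px(depth_m: float, focus_m: float,
                   strength: float = 10.0,          # hypothetical gain
                   max_radius_px: float = 20.0,     # hypothetical cap
                   foreground_scale: float = 0.2):  # hypothetical damping
    """Blur radius for a pixel at `depth_m` when focus is at `focus_m`."""
    if depth_m <= 0 or focus_m <= 0:
        raise ValueError("distances must be positive")
    # Thin-lens defocus grows with the difference of inverse distances.
    defocus = abs(1.0 / focus_m - 1.0 / depth_m)
    radius = min(max_radius_px, strength * defocus)
    # Damp the effect in front of the focus plane, mimicking the reduced
    # foreground blur observed on the phone.
    if depth_m < focus_m:
        radius *= foreground_scale
    return radius


for depth in (1.0, 2.0, 3.0, 10.0):
    print(f"object at {depth:>4.1f} m -> blur radius {blur_radius_px(depth, 2.0):.2f} px")
```

With focus at 2 m, the object on the focus plane gets no blur, the background blur grows with distance, and the object at 1 m receives only a fraction of the blur an optical lens would give it.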

Technical Note: There are a variety of ways to simulate a shallow depth of field in software; some of them begin by estimating the distances to objects in the scene to create a depth map. Because its two cameras are offset, each with a slightly different view of the scene, the iPhone 7 Plus is able to use the relative shift of objects between them, or parallax, to estimate how far away elements in the scene are from the camera itself. This technique is employed when the camera app is set to Portrait mode, which is when the Depth Effect is activated.
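The parallax principle in the note above corresponds to the standard stereo relation: depth equals focal length (in pixels) times the distance between the cameras, divided by the measured shift in pixels. The sketch below uses that textbook formula with made-up values; the focal length in pixels and the camera spacing are placeholders, not measured iPhone 7 Plus specifications.

```python
FOCAL_PX = 3000.0    # hypothetical focal length expressed in pixels
BASELINE_M = 0.01    # hypothetical spacing between the two cameras, in meters


def depth_from_disparity(disparity_px: float, focal_px: float = FOCAL_PX,
                         baseline_m: float = BASELINE_M) -> float:
    """Distance to an object from its pixel shift between the two views."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def disparity_for_depth(depth_m: float, focal_px: float = FOCAL_PX,
                        baseline_m: float = BASELINE_M) -> float:
    """Pixel shift expected for an object at `depth_m` (inverse relation)."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_px * baseline_m / depth_m


for depth in (0.5, 1.0, 2.5, 5.0):
    print(f"{depth:.1f} m -> {disparity_for_depth(depth):.1f} px of parallax")
```

Because the shift falls off as one over the distance, distant objects produce very little parallax with closely spaced cameras, which limits how far away a usable depth map can be computed.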

The iPhone 7 Plus creates a blur intensity roughly equivalent to a full-frame DSLR with a lens opened to f/3.5, as shown by this comparison:

Depth Effect drawbacks: Handling noise, details, and low-light shooting

Because the depth map is only an approximation, artifacts appear where fine details coincide with depth transitions, as illustrated by this comparison of the optical blurring from a full-frame DSLR with the synthetic effects created by the iPhone 7 Plus:

Shooting in low light also causes issues when the iPhone 7 Plus is set to Portrait mode. The smaller-aperture telephoto lens on the iPhone 7 Plus is much slower than the primary lens, which would normally be balanced by a slower shutter speed. However, unlike the primary wide-angle lens, the telephoto lens is not stabilized, so the iPhone isn’t able to achieve proper exposure for portraits taken in low-light scenes.
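The size of the gap between the two lenses can be estimated from their f-numbers. This is a back-of-the-envelope sketch using the nominal f/1.8 and f/2.8 values, assuming equal ISO and ignoring differences in lens transmission and pixel size; the example shutter time is hypothetical.

```python
import math


def stops_between(f_fast: float, f_slow: float) -> float:
    """Exposure difference in stops between two f-numbers."""
    return 2.0 * math.log2(f_slow / f_fast)


def shutter_for_same_exposure(shutter_s: float, f_fast: float, f_slow: float) -> float:
    """Shutter time the slower lens needs to match the faster lens's exposure."""
    return shutter_s * (f_slow / f_fast) ** 2


stops = stops_between(1.8, 2.8)
# Hypothetical starting point: 1/30 s on the primary f/1.8 lens.
needed = shutter_for_same_exposure(1.0 / 30.0, 1.8, 2.8)
print(f"f/2.8 is about {stops:.2f} stops slower than f/1.8")
print(f"1/30 s at f/1.8 becomes about 1/{1.0 / needed:.0f} s at f/2.8")
```

The telephoto lens needs well over twice the exposure time for the same brightness, and without stabilization such longer exposures are harder to hold steady.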

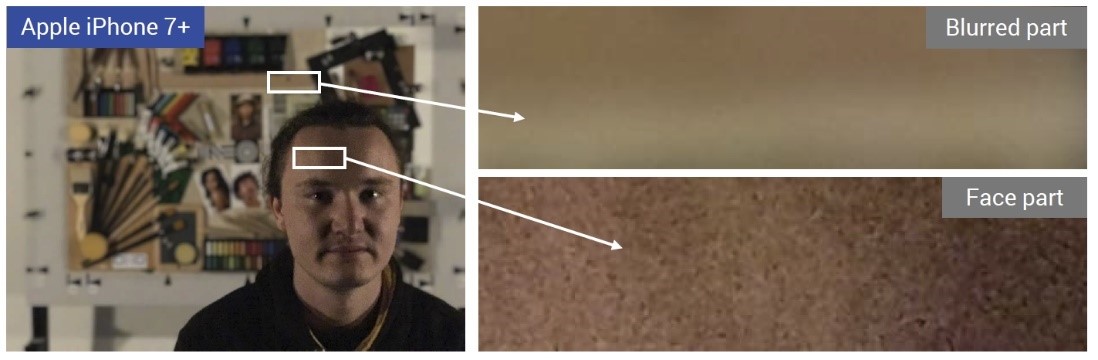

Noisy areas can also look artificial when the Depth Effect is activated in low-light conditions. This happens because the camera’s simulated bokeh also reduces the noise in those areas.

Unlike the true shallow depth-of-field look achieved with a DSLR, images taken using the iPhone’s Portrait mode exhibit different degrees of noise both in the subject (foreground) and background portions of the image. In bright light, noise is typically not very noticeable, but in low light the inconsistent rendering of noise can be quite distracting, as exemplified in the following image:

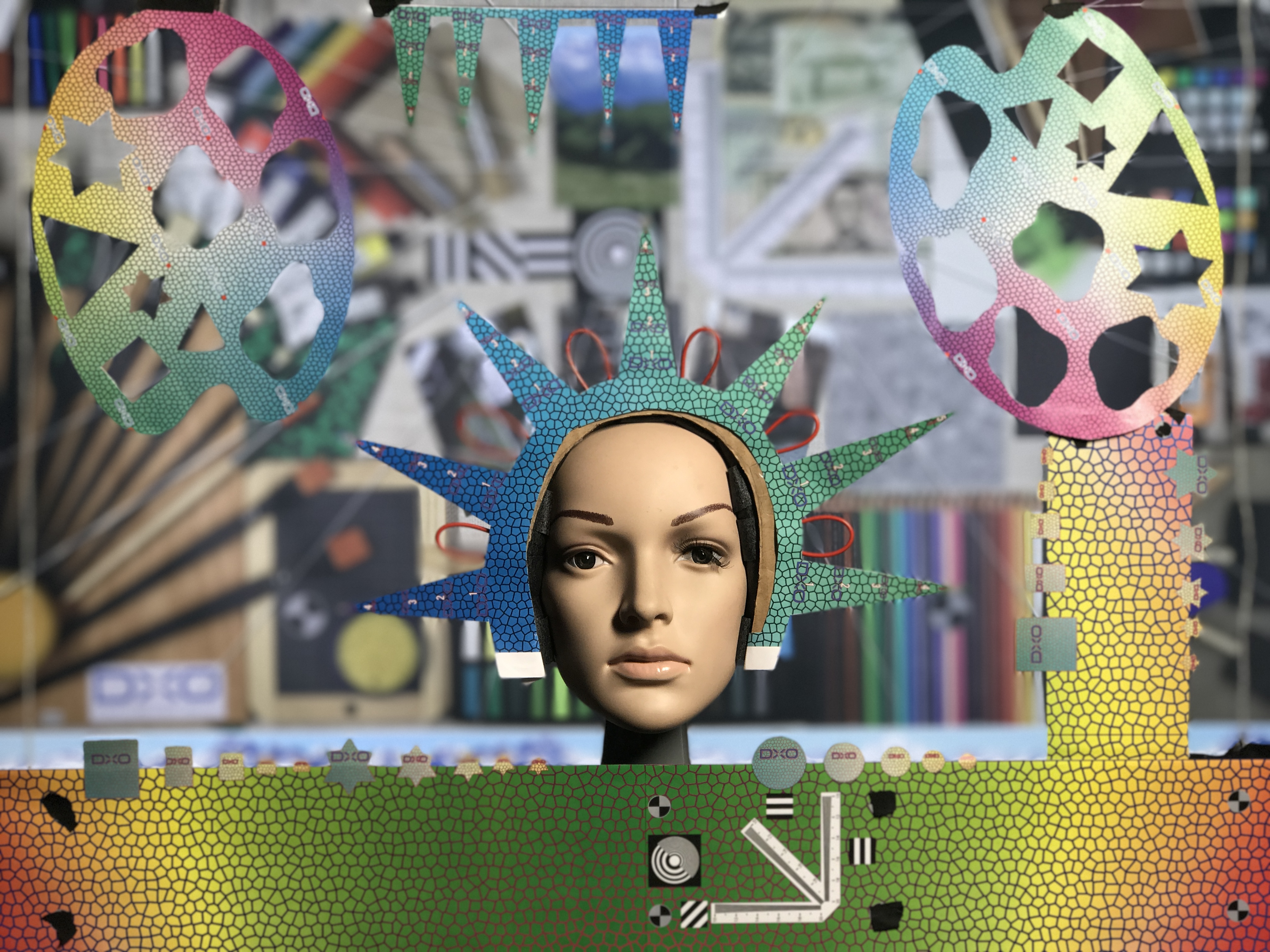

Measuring the accuracy of the Depth Effect used for Bokeh

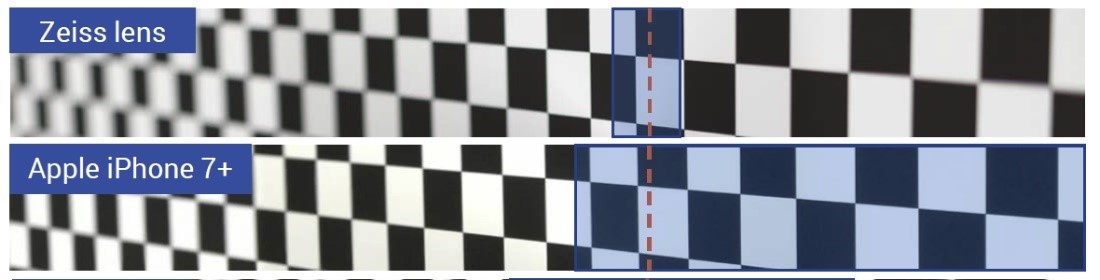

Another issue in evaluating Bokeh is how well the subject in an image is isolated – which helps attract attention to it. Lenses with a physically shallow depth of field do this automatically because of their optical properties. The iPhone 7 Plus can simulate this Depth Effect when you enable Portrait mode. However, this simulation blurs foreground objects much less than background objects. This is probably intentional on Apple’s part, and intended to reduce the possibility of visible artifacts in the foreground. To illustrate, here is an image of a test chart set at a 45-degree angle to the camera. The right side is much closer than the left, and the cameras are both focused on the center. The full-frame DSLR with a 50mm lens blurs both near and far, while the iPhone 7 Plus blurs only the portion of the chart that is farther away:

The blue highlights show the in-focus areas of these comparison images. The phone blurs only the background, while the Zeiss lens blurs both foreground and background. To get the phone to blur the foreground, it needs to be quite far from the subject.

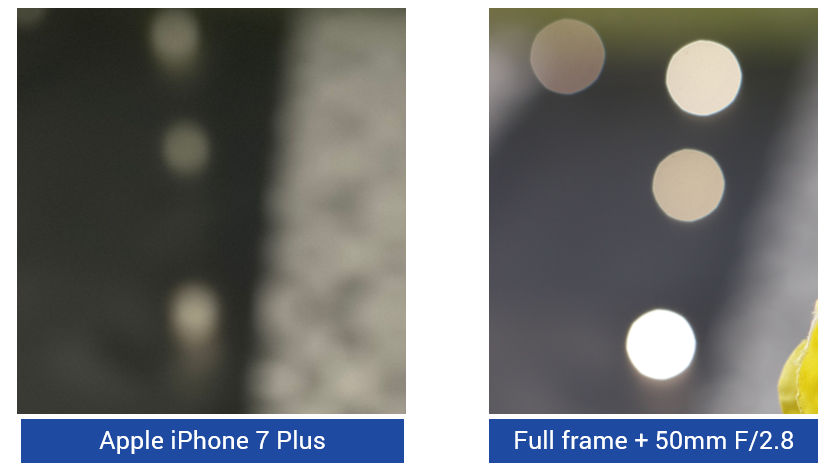

Testing bokeh quality

In addition to the amount of blur in the background of an image, there is also a subtle but important subjective quality to the shape and brightness of the blurred areas (or bokeh) that is particularly noticeable in blurred highlights. Camera lenses that provide a pleasing blur are said to have excellent bokeh. So when the blur effect is calculated after the fact in software, such as with the iPhone 7 Plus and several other competing smartphones, we need to evaluate how well the camera app can mimic the physical blurring that would have been created using an actual shallow depth-of-field lens. In our lab, we evaluated the bokeh of the iPhone 7 Plus against that of a high-end lens on a full-frame DSLR:

The full-frame DSLR sensor and lens generate a much more traditional bokeh even when stopped down to f/4, such as in this comparison image:

The shape of bokeh helps determine whether a photographer finds it pleasing. The modulation of the intensity within the shape is also important. Pleasing bokeh shapes are slightly brighter around the edges, and those edges are fairly sharp.

TIP: If you want to learn more about bokeh (pronounced bōˈkā) and how it can be a powerful tool in your photography, we recommend some informative online resources, such as Nikon’s article on Bokeh for beginners, and Luminous Landscape’s more technical piece that goes deeper into the optics. As you’d expect, there is also a fairly detailed overview in Wikipedia.

The quality of iPhone 7 Plus-generated bokeh

The iPhone 7 Plus generates a smooth bokeh, which is generally less desirable than the sharper edge typical of excellent optical bokeh. To successfully generate bokeh, Apple recommends keeping the subject within 2.5 meters. Beyond that distance, the two cameras can’t discern enough parallax for them to correctly generate the proper blur effect.

The iPhone 7 Plus’s telephoto lens can also help avoid deformation

An advantage of using a longer focal length is that it reduces the foreshortening distortion that comes with using a wide-angle lens to photograph nearby subjects. This deformation is especially apparent in portraits of people. The sample image below illustrates the type of deformation of the face that results from a full-frame head shot as taken with the wide-angle lens on the iPhone 7 (or any traditional smartphone, for that matter).

This effect can be minimized by moving further back, away from the subject, but that necessitates the use of digital zoom (cropping) to recreate the close-up composition, which in turn decreases details in the image. The telephoto lens on the iPhone 7 Plus, on the other hand, provides the best of both worlds – it minimizes deformation and distortion while retaining details. This helps the iPhone 7 Plus achieve a superior Bokeh sub-score of 50, which is the highest of any mobile device we have tested, and a substantial improvement over the 25 scored by the single-camera iPhone 7.
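The geometry behind this deformation can be put into numbers. The sketch below uses a simple pinhole-camera model: a feature that sits closer to the camera than the rest of the face is magnified in proportion to the ratio of the two distances. The 30 cm shooting distance and the 10 cm nose-to-ear depth are hypothetical values chosen for illustration.

```python
def relative_magnification(subject_distance_m: float, feature_offset_m: float) -> float:
    """How much larger a feature appears when it sits `feature_offset_m`
    closer to the camera than the reference plane (pinhole model)."""
    if not 0 <= feature_offset_m < subject_distance_m:
        raise ValueError("feature must lie between the camera and the subject plane")
    return subject_distance_m / (subject_distance_m - feature_offset_m)


def distance_for_same_framing(distance_m: float, focal_from_mm: float,
                              focal_to_mm: float) -> float:
    """Shooting distance that keeps the subject the same size in the frame
    after switching focal lengths."""
    return distance_m * focal_to_mm / focal_from_mm


NOSE_TO_EAR_M = 0.10   # hypothetical depth of a face
wide_distance = 0.30   # hypothetical head-shot distance at 28mm
tele_distance = distance_for_same_framing(wide_distance, 28.0, 56.0)

for label, d in (("28mm", wide_distance), ("56mm", tele_distance)):
    m = relative_magnification(d, NOSE_TO_EAR_M)
    print(f"{label} at {d:.2f} m: nose rendered {m:.2f}x larger than the ears")
```

Doubling the focal length doubles the working distance for the same framing, which in this example cuts the nose exaggeration from about 1.5x to 1.2x without any cropping.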

The combination of the ability to blur background objects, the quality of the resulting bokeh, and the preservation of proper shape of the subject are rolled up into our new Bokeh sub-score. In this area, as with Zoom, the iPhone 7 Plus’s second camera provides substantial benefits, explaining its higher Bokeh score.

Summary: The iPhone 7 Plus’s dual camera pays off, especially for portraits

While the two phones share similar scores for Video as well as for Photo sub-scores for Exposure and Contrast, Color, Autofocus, Texture, Noise, Artifacts, and Flash, the Plus delivers dramatic improvements in our new Zoom and Bokeh sub-scores. A combination of better-quality telephoto images in good light, reduced deformation, and a generally pleasing depth effect put the iPhone 7 Plus squarely ahead of the iPhone 7 in terms of image quality for those doing portrait, macro, or sports photography. You can see here how the differences show up in our new DxOMark Mobile Photo scoring for the two phones:
